Llama 3.1 Chat Template

Llama 3.1 JSON tool calling chat template. Changes to the prompt format, such as EOS tokens and the chat template, have been incorporated into the tokenizer configuration which is provided. The included tokenizer will correctly format [...]. This model uses the following chat template and does not support a separate system prompt: [...]

The tool calling prompt gives the model these rules: Only reply with a tool call if the function exists in the library provided by the user. If it doesn't exist, just reply directly in natural language. When you receive a tool call response, use the [...].
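The tool calling rules are addressed to the model, but a client can apply the same check before executing anything. The following is a minimal sketch of that idea, not the template's own logic. It assumes a tool call arrives as a JSON object shaped like `{"name": ..., "parameters": {...}}`; that shape, the `handle_reply` helper, and the `get_weather` tool are hypothetical names introduced here for illustration.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical tool, for illustration only; not part of any real library.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"


# The "library provided by the user": tool name -> callable.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def handle_reply(reply: str, tools: Dict[str, Callable[..., Any]]) -> Dict[str, Any]:
    """Classify a model reply as a tool call or as plain text.

    Assumption: a tool call is a JSON object with a string "name" and an
    object "parameters". Anything else is treated as natural language.
    """
    try:
        payload = json.loads(reply)
    except json.JSONDecodeError:
        # Not JSON at all, so it is an ordinary natural-language answer.
        return {"type": "text", "content": reply}

    if not isinstance(payload, dict):
        return {"type": "text", "content": reply}

    name = payload.get("name")
    params = payload.get("parameters", {})
    if not isinstance(name, str) or name not in tools or not isinstance(params, dict):
        # The function is not in the library: never execute it.
        return {"type": "text", "content": reply}

    # The function exists, so run it and return the result to the caller,
    # who would pass it back to the model as the tool call response.
    return {"type": "tool_result", "name": name, "content": tools[name](**params)}


if __name__ == "__main__":
    print(handle_reply('{"name": "get_weather", "parameters": {"city": "Paris"}}', TOOLS))
    print(handle_reply("I can answer that directly.", TOOLS))
```

Treating an unknown function name as plain text rather than raising keeps the client consistent with the rule that a missing function should lead to a natural-language reply.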

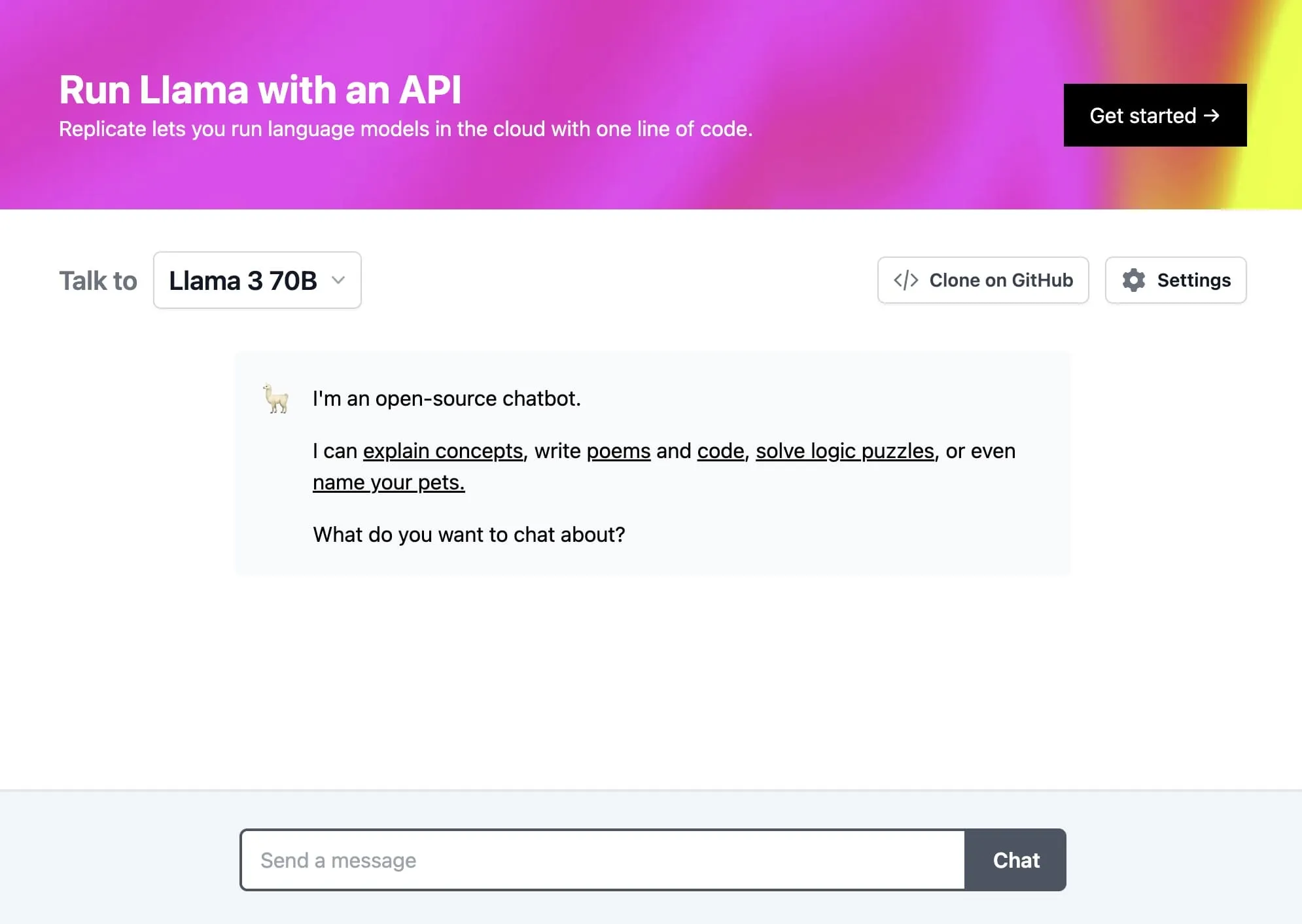

Chat with Meta Llama 3.1 on Replicate

Llama AI Llama 3.1 Prompts

Online Llama 3.1 405B Chat by Meta AI Reviews, Features, Pricing

antareepdey/Medical_chat_Llamachattemplate · Datasets at Hugging Face

llama3.1405b

Chat With Llama 3.1 Using Whisper a Hugging Face Space by candenizkocak

Run Meta Llama 3.1 405B with an API Replicate blog

Creating a RAG chatbot with Llama 3.1 can significantly enhance your

wangrice/ft_llama_chat_template · Hugging Face

Building a Chat Application with Ollama's Llama 3 Model Using
